Godfathers of AI

Pioneers in the field of AI

5/2/2023 · 5 min read

There is no single person who is universally recognized as "The Godfather of AI" since the development of artificial intelligence has been a collaborative effort involving many individuals over several decades.

Several pioneers made significant contributions to the field's development and are often referred to as the "fathers" or "founders" of AI. They include:

John McCarthy

Marvin Minsky

Allen Newell and Herbert Simon

Arthur Samuel

Claude Shannon

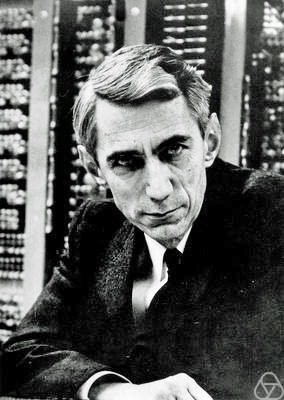

John McCarthy

By "null0" - https://www.flickr.com/photos/null0/272015955/, CC BY-SA 2.0, https://commons.wikimedia.org/w/index.php?curid=1297606

John McCarthy (September 4, 1927 – October 24, 2011) was an American computer scientist and cognitive scientist who is credited with coining the term "artificial intelligence" (AI) in 1955. He was one of the pioneers of the field of AI and made significant contributions to its development over several decades.

McCarthy received his PhD in mathematics from Princeton University in 1951 and began working in the field of AI in the mid-1950s. In 1958 he developed the Lisp programming language, which became the dominant programming language for AI research in the 1970s and 1980s.

McCarthy also made significant contributions to early AI, particularly in formal logic and automated reasoning, where he argued for representing knowledge and common sense in mathematical logic. He founded the Stanford Artificial Intelligence Laboratory, which carried out research in areas such as natural language processing, robotics, and computer vision.

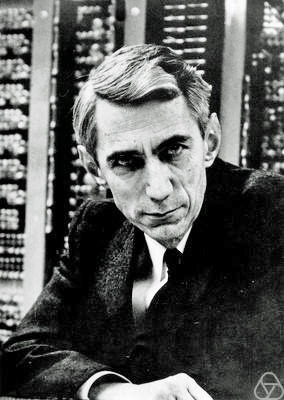

Marvin Minsky

Marvin Minsky (August 9, 1927 – January 24, 2016) was an American cognitive scientist and computer scientist who made significant contributions to the field of artificial intelligence (AI). He is often considered one of the founders of the field of AI, along with John McCarthy, and is known for his work in the areas of machine perception, robotics, and artificial neural networks.

Minsky received his PhD in mathematics from Princeton University in 1954. Along with John McCarthy, he co-founded the MIT Artificial Intelligence Project in 1959, which grew into the MIT Artificial Intelligence Laboratory and became a hub for AI research and development in the following decades.

Minsky is credited with a number of important AI concepts and technologies. In 1951 he built SNARC, one of the first neural network learning machines, and in 1969 he and Seymour Papert published "Perceptrons," an influential analysis of what simple neural networks can and cannot compute. He also contributed to robotics, building early mechanical arms and hands, and he invented the confocal microscope.

In addition to his work in AI and robotics, Minsky was also a prominent figure in the field of cognitive science, where he studied the human mind and how it processes information. He was a professor at MIT for many years and received numerous awards and honors for his contributions to the field of AI.

By The original uploader was Sethwoodworth at English Wikipedia. - Transferred from en.wikipedia to Commons by Mardetanha using CommonsHelper., CC BY 3.0, https://commons.wikimedia.org/w/index.php?curid=7292026

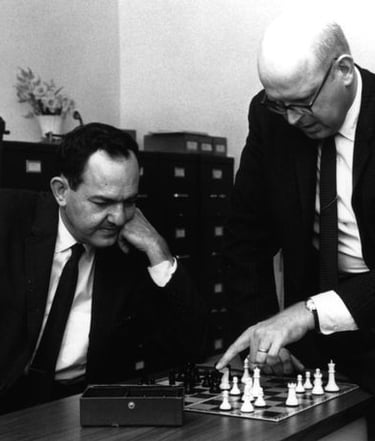

Allen Newell and Herbert Simon

Allen Newell (March 19, 1927 – July 19, 1992) and Herbert Simon (June 15, 1916 – February 9, 2001) were American computer scientists and cognitive psychologists who made significant contributions to the field of artificial intelligence (AI) and cognitive psychology. They are known for the physical symbol system hypothesis, which holds that a physical symbol system, such as a suitably programmed computer, has the necessary and sufficient means for general intelligent action.

Newell and Simon worked together extensively throughout their careers, beginning in the 1950s, and collaborated on a number of important projects. Working with programmer Cliff Shaw, they developed the Logic Theorist (1956), often described as the first AI program, which proved theorems from Whitehead and Russell's "Principia Mathematica." They are perhaps best known for the General Problem Solver, one of the first AI programs designed to solve a wide range of problems with a single general method.

In addition to their work in AI, Newell and Simon made significant contributions to the field of cognitive psychology, where they studied the human mind and how it processes information. They developed the Information Processing Theory, which proposed that human cognition could be understood as the processing of information through a set of mental processes.

Image Courtesy - Carnegie Mellon University https://en.wikipedia.org/

Arthur Samuel

By Xl2085 - Own work, CC BY-SA 4.0, https://commons.wikimedia.org/w/index.php?curid=63473915

Arthur Samuel (December 5, 1901 – July 29, 1990) was an American pioneer in the field of artificial intelligence (AI) and computer science. He is best known for his work on machine learning, which is the process of enabling machines to learn from data and make decisions based on that learning.

Samuel began his career as an electrical engineer, working at Bell Labs and later at IBM. At IBM in the 1950s he taught a computer to play checkers, using techniques that let the program learn from experience and improve its play over time. The program is widely regarded as one of the first successful self-learning programs, and Samuel is credited with popularizing the term "machine learning" in 1959.

In addition to his work on machine learning, Samuel made other contributions to computing. His checkers program included one of the earliest uses of alpha-beta pruning to search game trees, and he is credited with early work on hash tables at IBM. After retiring from IBM in 1966 he joined Stanford University, where he continued his research and later helped document the TeX typesetting system.

Claude Shannon

Claude Shannon (April 30, 1916 – February 24, 2001) was an American mathematician and electrical engineer who is widely regarded as the father of modern digital circuit design theory and information theory. He made significant contributions to the fields of communication theory, cryptography, and digital circuit design.

Shannon is best known for his paper "A Mathematical Theory of Communication," published in 1948, in which he laid out the foundations of modern information theory. In this paper, Shannon introduced entropy as a measure of information content, and he showed that information can be transmitted over a noisy channel with arbitrarily small error rates, provided the transmission rate stays below the channel's capacity and suitable error-correcting codes are used.
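To make the idea of entropy concrete, here is a minimal sketch in Python. It computes H = -Σ p(x) log2 p(x), measured in bits per symbol, where p(x) is estimated from how often each character appears in a message. The function name `shannon_entropy` and the choice to treat each character as one symbol are assumptions made for illustration, not something taken from Shannon's paper.

```python
import math
from collections import Counter


def shannon_entropy(message: str) -> float:
    """Entropy, in bits per symbol, of the character frequencies in message."""
    counts = Counter(message)
    total = len(message)
    # Sum p * log2(1/p) over every distinct symbol; this equals -sum(p * log2(p)).
    return sum((c / total) * math.log2(total / c) for c in counts.values())


print(shannon_entropy("aaaa"))  # one symbol only, so no uncertainty
print(shannon_entropy("aabb"))  # two equally likely symbols
print(shannon_entropy("abcd"))  # four equally likely symbols
```

A message that repeats a single symbol carries no information per symbol, while a message whose symbols are all equally likely carries the most: one bit per symbol for two symbols, two bits per symbol for four.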

Shannon also made foundational contributions to digital circuit design. In his 1937 master's thesis at MIT he showed that Boolean algebra, developed by George Boole in the 19th century, can be used to design and analyze switching circuits. His 1948 paper also popularized the binary digit (bit), a term he credited to John Tukey, which is now the fundamental unit of information in computing.

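In addition to his work in information theory and digital circuit design, Shannon was a pioneer in cryptography, the study of secure communication. During World War II he worked on cryptography at Bell Labs, and his 1949 paper "Communication Theory of Secrecy Systems" proved that the one-time pad cipher is unbreakable when the key is truly random, as long as the message, kept secret, and never reused.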
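The one-time pad mentioned above is simple enough to sketch in a few lines of Python. This is only an illustration under stated assumptions: the function names `otp_encrypt` and `otp_decrypt` are hypothetical, and the sketch combines message and key with XOR, which is the usual way the cipher is expressed on modern computers.

```python
import secrets


def otp_encrypt(plaintext: bytes) -> tuple[bytes, bytes]:
    """Return (key, ciphertext) using a fresh random key as long as the message."""
    key = secrets.token_bytes(len(plaintext))  # random key, to be used only once
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, key))
    return key, ciphertext


def otp_decrypt(ciphertext: bytes, key: bytes) -> bytes:
    """XOR with the same key undoes the encryption."""
    return bytes(c ^ k for c, k in zip(ciphertext, key))


key, ciphertext = otp_encrypt(b"hello")
print(otp_decrypt(ciphertext, key))
```

The security depends entirely on how the key is handled: it must be random, at least as long as the message, kept secret, and never reused for a second message.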

By Jacobs, Konrad - https://opc.mfo.de/detail?photo_id=3807, CC BY-SA 2.0 de, https://commons.wikimedia.org/w/index.php?curid=45380422